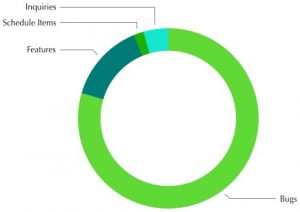

We’ve been using Manuscript (originally FogBugz) to manage the project since the first week of development in August 2014. Since then, the team and playtesters have created 3412 cases. This breaks down into Manuscript’s categories as:

123 Inquiries. This includes playtester feedback and comments, and 75 completed games. I try to do some analysis of each game, to learn where players get stuck and to make sure things are tuned OK. Once in a while this uncovers bugs.

485 Features and 61 Schedule Items. These represent tasks like “Map Creation,” “iPhone X support,” or “Sweep to be sure ChooseLeader is followed by a leader test.” The two categories are pretty similar, but a Feature would probably be passed to QA to check, and a Schedule Item could usually just be marked completed.

2714 Bugs. These are things that didn’t behave as expected. They’re typically fixed, then verified by QA as working correctly. Since we added playtesters over time, we tended not to get a lot of duplicate bugs (though it’s never a problem if we do, since a different report may give insight into reproducibility, and they’re easy to verify).

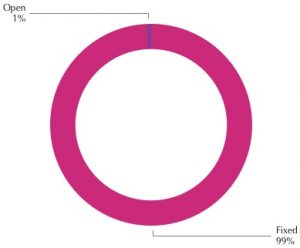

Overall, we closed 3313 of the 3412 cases. Of the 99 cases not closed, 26 are feedback that I was keeping handy (and probably should close to clean up the project). Most of the rest are either issues that we deferred as part of the triage process (see part 1) or features that would be nice to have in an update.

2695 of 2714 bugs have been closed. A few are still being verified as fixed, or were deferred for an update. (That’s about 1.8 bugs per day over the entire project.)
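For anyone who wants to check the arithmetic, here is a minimal sketch in Python using only the counts quoted above. The variable names are made up for illustration, and the last line assumes the 1.8 bugs-per-day figure refers to closed bugs, which the post doesn't say explicitly.

```python
# Counts quoted in the post
total_cases, closed_cases = 3412, 3313
total_bugs, closed_bugs = 2714, 2695
bugs_per_day = 1.8  # rate quoted in the post


def pct(part, whole):
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)


open_cases = total_cases - closed_cases        # cases still open
case_close_rate = pct(closed_cases, total_cases)
bug_close_rate = pct(closed_bugs, total_bugs)

# Assumption: the 1.8/day rate is closed bugs divided by project days,
# so dividing back out gives the implied project length in days.
implied_days = round(closed_bugs / bugs_per_day)

print(open_cases, case_close_rate, bug_close_rate, implied_days)
```

Under those assumptions, roughly 97% of all cases and a bit over 99% of bugs are closed, and the quoted rate implies a project length of about four years.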

I don’t really like managing purely by numbers (the way a really large project might have to), but it’s good to see that our gut feeling that the game is solid is also backed by data.

(part 1 of the series on bugs is here)

Speaking of features, will the game have cloud syncing across devices?

Just saw it in the App store for pre-order…

Do you plan to add a “report bug” feature on launch?

And do you have some other “fun” bugs? In terms of gameplay or story, not code 😀

Yes, it’s hidden but a 2-finger tap will enable a Report button.

Posted a few #BugOfTheDay on Twitter.